This morning I was sipping my usual espresso before starting the day, peacefully browsing LinkedIn posts, when I came across the nth+1 article authored by a member of a new zoological species: the (self-proclaimed) AI expert.

While the guy (some blogger looking for attention) and the topic (consciousness, machines, and whatever-else-the-super-mega-fuck he was thinking he really needed to write) do not matter a ton, two thoughts rapidly fired in my mind.

First off, more than a thought, an emotion: I am a pissed-off, espresso-loving Italian who really, really enjoys his AM cup without being disturbed by bad stuff, like reading nonsensical AI crap.

Secondly: how can I stop this and prevent worldwide espresso poisoning, while also helping other people who are legitimately interested in AI to rapidly brush aside these scammers and focus on real AI content?

Relatedly, another issue came to mind:

How can I help employers and enterprises separate the AI gold from all the crap and the fakes around it?

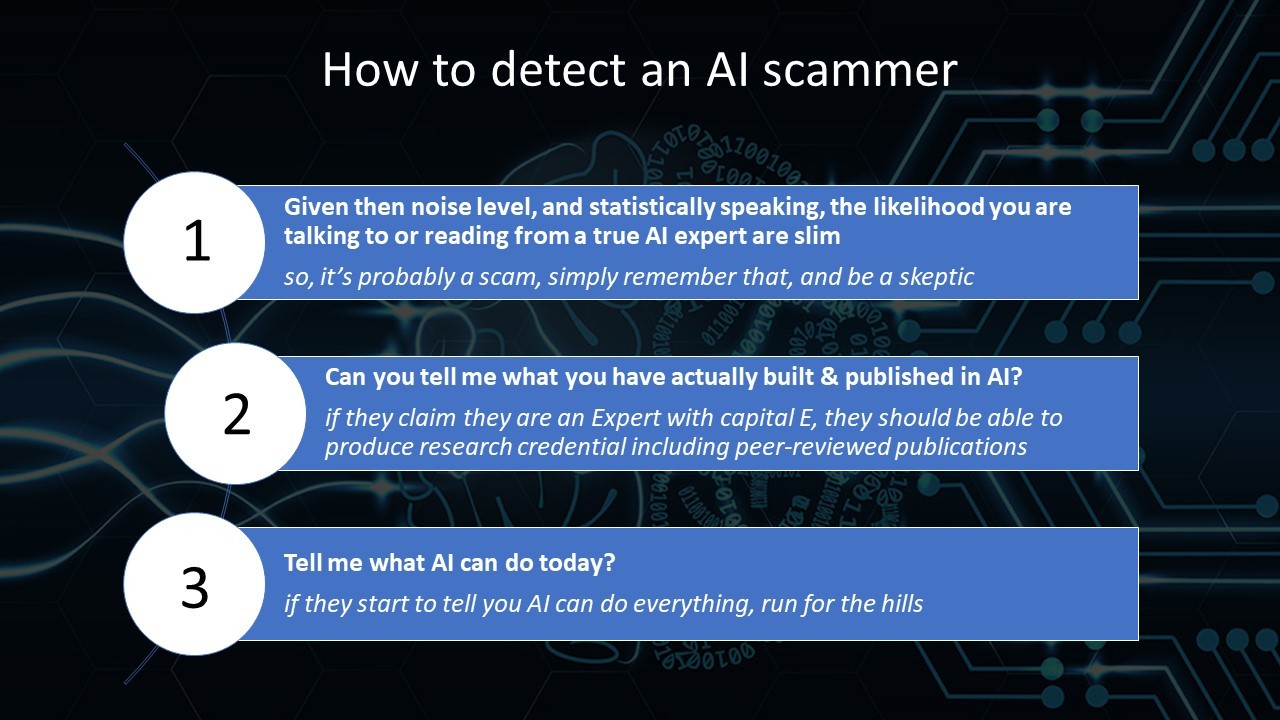

I came up with a short, three-rule cheat sheet (find it at the end of this post) that you can download, print, and use free of charge.

You can keep these principles in mind when reading a post by an “AI Expert”, when hiring an employee who claims to be one, or, if you are a business owner or in charge of an AI-powered product, when interviewing so-called “AI solution providers” who claim to be the last of a long AI Jedi lineage.

This is going to be incomplete, quick, and easy, but it’s better to get an AI flu shot than to walk around carelessly in a society infested with AI viruses.

Though, it was not like that until recently…

The good AI times when things were really bad

I joined the quest for AI right out of high school in Italy, back when building Neural Networks was neither fashionable (Versace shirts were, though…) nor cool.

(Yes, the real name of the AI that works today is Neural Networks.)

It was actually a stigma: I recall, during my PhD in Boston, walking out of MIT one day after being told “that Neural Network Voodoo stuff won’t even work, focus on decision trees.” Depressing.

They were trying times, and pure passion for science (plus a few teaspoons of stubbornness mixed with craziness) was essential for me and my many AI colleagues to pull ahead despite the criticism and the patronizing comments. I even recall a program manager from a DoD agency telling me “…look, I like you, and I can even consider this proposal, but please remove all this Neural Network nonsense from the text and then we’ll see”.

But things turned around nicely for people who were able to stick to their beliefs and theories. Not so much for MIT, which lost the AI race: the smartest AI robot it spun out was the Roomba, which bumps into tables and chairs like a blindfolded, drunk, overweight gerbil. Below is a demonstration of the amazing tech:

(In case the video does not play, click here: https://www.youtube.com/watch?v=ASjLXrbVlMw.) But I digress; back to AI.

However, I should have been more careful about what I wished for…

While it’s nice that Neural Networks left the MIT-traditional-AI-theory-loving people in the dust, the rise of AI and of AI awareness has unfortunately vomited on us a plethora of fake “AI experts.”

It has also, in a sense, pushed people who wanted to be part of the “AI buzz” to lie profusely. Some of the same people who were telling us “this is voodoo manure that will never work” are now Neural Network advocates, along with brand-new buckets of people who sprinkle “AI, ML, Deep Learning” on their resumes, websites, etc., like parsley on a Porcini Mushroom Risotto (I can cook a mean one: the parsley goes on fresh at the end… yes, profusely).

The resulting new zoological species comes in different shapes and forms, from “AI experts” to “AI programmers” to “AI Engineers”, all claiming direct descent from AI Zeus and to have drunk from the fountain of AI knowledge.

So, here is a cheat sheet for you, in the form of a few simple rules.

Let’s start with the easy one:

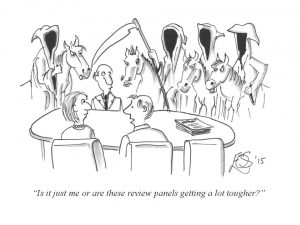

If they start talking about Elon Musk and “AI taking over the world”, or if they open their mouth and, after hearing “Elo…”, you just know they’ll finish with “…n Musk”, run for the door (or show them one). I put a picture below to remind you why:

Rule #0: If they bring out Musk, they are done.

Do the math, for f#@& sake: They are NOT experts

OK, you got rid of the Muskian tail of the Gaussian. Phew! Now let’s get to the real Rule #1.

Now, seriously, let’s just take a moment here and do some math.

There are about 22K PhD-educated AI experts worldwide (check below if you do not believe me).

If you estimate that roughly half of them truly, truly (truly) know what they are doing, you land at around 11K; count only the real thought leaders and you are destined to land waaaay below 10K. With a world population approaching 8B, the chance that you are talking to an AI expert is truly slim.
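To make “do the math” literal, here is a quick back-of-the-envelope sketch in Python. It only plugs in the rough figures quoted above (about 22K PhD-educated AI experts, an assumed 50% who truly know their stuff, and a world population of about 8B); the constant and function names are made up for illustration, and the result is an order-of-magnitude estimate, not data.

```python
# Back-of-the-envelope odds that a randomly chosen person is a true AI expert.
# All figures are the rough numbers quoted in this post, not precise statistics.
PHD_AI_EXPERTS = 22_000            # approximate PhD-educated AI experts worldwide
FRACTION_TRULY_EXPERT = 0.5        # assumption: roughly half truly know what they are doing
WORLD_POPULATION = 8_000_000_000   # population approaching 8B


def odds_of_real_expert(experts: int, fraction: float, population: int) -> float:
    """Return the probability that a random person is a true AI expert."""
    return experts * fraction / population


p = odds_of_real_expert(PHD_AI_EXPERTS, FRACTION_TRULY_EXPERT, WORLD_POPULATION)
# Print the probability and the equivalent "1 in N" odds.
print(f"probability: {p:.2e} (about 1 in {round(1 / p):,})")
```

Even with the generous 11K figure, the odds come out at roughly 1 in 730,000; restrict the count to the sub-10K real thought leaders and they get slimmer still.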

So, rule #1, your default is:

Rule #1: Statistically speaking, I am not talking to an expert. I need to be skeptical.

Now, why is talking to a PhD-educated expert important? Let’s go to rule #2:

If they claim they are experts, tell them “Show me.”

Now, there are smart guys around, plenty of super-smart people and geniuses who did not originally choose AI as their passion and focus but now want to get into AI. I know plenty of these guys, and what I say is:

Fine, and great: this is one of the best things that can happen on Earth today, and it should be applauded. We need more people interested in AI! More! More!

The problem arises when these people (and their not-so-super-sharp cousins who did not drink from the smart river) claim to be AI experts, and that’s when rule #2 comes in. It is really simple: ask them to show you, like Morpheus answering Neo’s “I know Kung Fu” with “Show me.”

What is the background of the person talking to you? Have they undergone a formal AI education? If so, where, when, and what have they studied?

Ask them to produce publications (papers, book chapters, abstracts) in the AI field they claim expertise in. This is a very simple but super-indicative acid test.

Why? Publishing a peer-reviewed AI paper requires months or years of hard work, plus the rigorous thought process of pitching your work and being judged by expert peers (and digesting the almost unavoidable revisions and rejections). It’s a mental gym that you want your expert/employee/consultant to have gone through: a conditio sine qua non, without which their output as an AI expert is not valid.

AI is such a broad and deep field. The brain is an immensely complicated machine, and tens of thousands of studies have analyzed, dissected, and attempted to formalize its complexity mathematically. You want to rely on somebody who knows his AI shit, in particular when you hire an “AI prophet” who needs to tell you where AI is going so that your organization is prepared. I have spent 20+ years in the field myself, and I have barely scratched the surface… knowing how every single synapse in your brain works, being able to model it mathematically along with the millions of other neuronal structures that do the work, all the way up to macro aspects of behavior, not to mention the issue of putting this stuff in silicon… it’s not something you learn on Coursera: a lifetime is not enough. Let’s be real here and cut the crap.

If they have not put in years of work, and proved to their peers that they are worthy of their claims, then pass and ignore.

So, to summarize:

Rule #2: If you are an Expert with a capital E, show me your peer-reviewed publications. If you don’t have them, good-bye.

Hey, quick question… what do you think AI can do today?

This is another acid test.

While there is a widespread belief, particularly among stamp-licking Silicon Valley-ists, that ‘be naïve, be bold, the less you know about a subject, the better… you will think out of the box and make a fortune’, I invite you to step into a Mars-bound rocket with somebody who just told you ‘Hey, I have never piloted a spacecraft before, and that’s why it’s going to work’.

See you.

Now, in my experience, the amount of stuff people think AI can do, is good at, and is ready to be deployed for (including the crowd that thinks AI is ready to take over and kill us all) is inversely proportional to how much they truly know about AI.

Simply stated:

True AI expert => “Today’s AI has limitations”

Having worked on it, she knows how hard it is to build a system that works with 100% accuracy, and she will clearly articulate expectations, limitations, and a ‘roadmap to perfection’ that is usually years away. But it’s true, and the resulting AI will do what was promised, which most often is still tremendously useful. On the contrary:

AI scam => “I can build you an AI that does everything you want”

So, simply put, the third rule:

Rule #3: If they start to tell you AI can do everything, run for the hills.

OK, here are the three rules for you in a printable cheat sheet, as promised:

These rules are not Excalibur, but can help.