Can artificial intelligence work in manufacturing, and what does it take to turn myth into reality?

This article originally appeared on Machine Design. You can view the original here.

We’ve come a long way since the introduction of the steam engine in the 1700s. That invention signaled the start of the first Industrial Revolution, which was followed by the second (electrification) and, more recently, by a third (computerization).

Today, we are on the edge of yet another: the fourth Industrial Revolution, led by automation and artificial intelligence. That said, its rise has not been without challenges.

Take the manufacturing industry, for example: There is strong competitive pressure when it comes to quality, yet quality control still relies heavily on human inspection. Aside from being expensive and increasingly scarce, human inspectors are no match for the production rhythms and volumes of industrial machines. In other words, a technician checking compliance with production standards across a fleet of machines that output thousands of products per day simply cannot keep up, not to mention the risk of human error.

As a result, the majority of manufacturers are turning to emerging technologies such as AI and machine learning to propel them into Industry 4.0. In fact, 79% are using machine learning specifically to help automate tasks, because a human worker cannot give thousands of products per day the sustained attention that a machine learning solution can.

Moreover, machine learning, and in particular neural networks and deep learning, is a prime candidate for integration with existing workflows, processes, and human resources to enable persistent quality control and proactive maintenance, and to boost overall quality in an increasingly competitive landscape.

Embracing Industry 4.0 AI (And the Many Ways to Get This Wrong)

No manufacturer wants to be a dinosaur when it comes to embracing the AI revolution. Despite the fact that an estimated 80% of enterprises claim to already be using AI in some form, they “sense” the challenge. In fact, research shows that 91% of companies foresee significant barriers to AI adoption due to a lack of IT infrastructure and a shortage of AI experts.

Very few manufacturers have a clear grasp of what it really takes to effectively embed AI into complex machines and processes. On top of that, they’re tasked with transforming a still heavily human-dependent quality inspection process into an AI-powered effort. To help illustrate the many myths and misunderstandings around the adoption of AI in Industry 4.0, we’ll use a concrete example of a hypothetical company, Packaging Global Industries (PGI).

PGI builds a variety of packaging machines sold to companies that pack items at industrial scale. While these machines vary according to the type of items in need of packaging (from cosmetics to food, pharmaceuticals, gifts, beverages and more), PGI has an overall goal to improve quality control and reduce returns/rejects. After an initial investigation, PGI has launched a corporate-wide initiative aimed at the deployment of AI-powered quality control in its machines.

Following the recent rise in AI awareness, coupled with competitive pressure from other players that are looking into AI, PGI has set an aggressive roadmap for introducing visual AI inspections in its new machines.

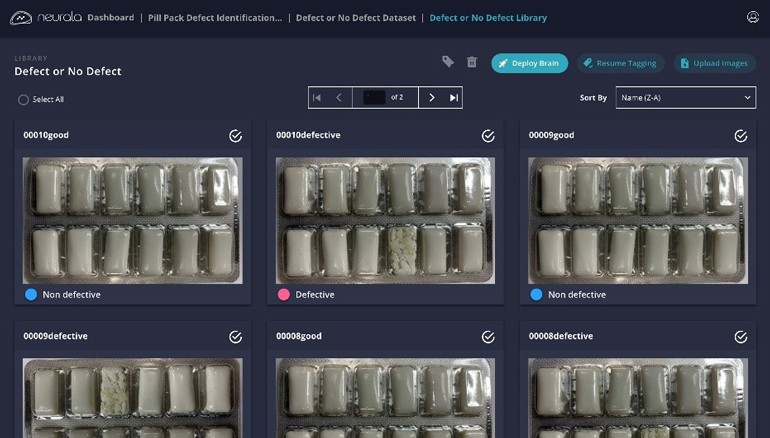

The first step? Collecting pertinent data (mostly, production run images gathered from cameras already installed in their newest machines) to train custom vision AI models.

Myth No. 1: All the data to build custom visual AI for quality control is freely available

The first challenge PGI will face is gathering and preparing data for training custom visual AI models for quality control. Data is imperative to AI: Neural networks and deep learning architectures rely on deriving a function to map input data to output data.

The effectiveness of this mapping function hinges on both the quality and quantity of the data provided. In general, having a larger training set enables more effective features in the network, leading to better performance. In short, large quantities of high-quality data lead to better AI.

But how do companies go about producing and preparing this data? Amassing and labeling (or annotating) data is usually the most time-consuming and expensive step in data preparation. Labeling enables a system to recognize categories or objects of interest in the data and defines the outcome the algorithm should predict once deployed. Oftentimes, internal annotation is the only option for enterprises due to privacy or quality concerns. This might be because data can’t leave the facility or needs extremely accurate tagging from an expert.

PGI will need to amass and label thousands of images of healthy and defective parts. Moreover, there is a sharp imbalance in the data: Most of it shows healthy (non-defective) products, so the company needs to collect more data to achieve the balanced image set that training a deep neural network requires. One way to overcome this imbalance is to use a different type of AI system that focuses on anomaly detection (namely, “this product does not look like the good ones”) rather than on classifying products as good or bad. Once the data is prepared and ready, the second task is to build a proof of concept (PoC) implementation of the AI system, which brings up the second myth.
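To make the anomaly detection idea concrete, here is a minimal sketch in Python. It is not PGI’s method or any vendor’s product: it assumes each image has already been reduced to a short list of numeric features (the two features below, mean brightness and seal width, are hypothetical), learns what “good” looks like from good products only, and flags anything that deviates strongly. A real system would typically learn its features with a neural network.

```python
from statistics import mean, stdev


def fit_good_profile(good_samples):
    """Learn per-feature mean and standard deviation from good products only."""
    columns = list(zip(*good_samples))
    return [(mean(col), stdev(col)) for col in columns]


def anomaly_score(profile, sample):
    """Largest absolute z-score across features; higher means less like the good set."""
    scores = []
    for value, (mu, sigma) in zip(sample, profile):
        if sigma > 0:
            scores.append(abs(value - mu) / sigma)
        else:
            # Feature never varied in the good set: any deviation is suspicious.
            scores.append(0.0 if value == mu else float("inf"))
    return max(scores)


def is_anomalous(profile, sample, threshold=3.0):
    """Flag a product whose score exceeds the (assumed) threshold of 3 sigmas."""
    return anomaly_score(profile, sample) > threshold


# Hypothetical feature vectors: [mean brightness, seal width in mm].
good = [
    [0.50, 12.0],
    [0.52, 12.1],
    [0.49, 11.9],
    [0.51, 12.0],
    [0.48, 12.2],
]
profile = fit_good_profile(good)

print(is_anomalous(profile, [0.50, 12.05]))  # close to the good products
print(is_anomalous(profile, [0.50, 15.0]))   # seal far wider than any good one
```

The appeal of this approach for PGI is that no defective examples are needed for training, which sidesteps the imbalance; the trade-off is that the system can only say “unusual,” not which defect it has found.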

Myth No. 2: It’s easy to hire AI experts to build an internal AI solution

Despite a plethora of AI tools for developers, AI expertise is hard to find. How hard? There are around 300,000 AI experts worldwide (22,000 Ph.D.-qualified), with the demand for AI talent overshadowing the supply. While no technology can speed up the training of a Ph.D. in AI, there are nascent software frameworks on the market that sidestep the need for in-depth knowledge of the field. Without them, both hypothetical and real companies risk waiting a long time to find adequate AI talent.

Myth No. 3: Once a PoC is successful, building a final system is just “a bit more work”

If PGI has done things right until this step and has succeeded in securing internal/external AI resources to design a working PoC, they may assume that they’re only steps away from deploying a feasible solution.

The truth is that AI adoption in a production workflow requires clear success criteria and a multi-step approach. While the first step is often a PoC, countless PoCs have fallen short of implementation for reasons that have little to do with AI and much to do with planning. To avoid wasted time and money, organizations need to define clear criteria and a timeline in advance to decide whether the tech should go into production. A simple benchmark such as, “If the PoC delivers X at Y functionality, then we’ll launch it here and here by this time,” would go a long way in terms of helping enterprises define an actual deployment scenario.
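One way to keep such a benchmark honest is to write it down in a form that can be checked mechanically once the PoC results are in. The Python sketch below is purely illustrative: the three metrics (defect recall, false reject rate, latency) and every threshold are assumptions chosen for the example, not figures from PGI or from any standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PocCriteria:
    """Targets agreed on before the PoC starts (all names are hypothetical)."""
    min_defect_recall: float      # share of true defects the model must catch
    max_false_reject_rate: float  # share of good products wrongly rejected
    max_latency_ms: float         # time allowed per inspection


@dataclass(frozen=True)
class PocResult:
    """What the PoC actually measured on held-out production data."""
    defect_recall: float
    false_reject_rate: float
    latency_ms: float


def unmet_criteria(criteria, result):
    """Return the names of the metrics that miss their target."""
    failures = []
    if result.defect_recall < criteria.min_defect_recall:
        failures.append("defect_recall")
    if result.false_reject_rate > criteria.max_false_reject_rate:
        failures.append("false_reject_rate")
    if result.latency_ms > criteria.max_latency_ms:
        failures.append("latency_ms")
    return failures


def go_to_production(criteria, result):
    """The go/no-go decision: every criterion must be met."""
    return not unmet_criteria(criteria, result)


# Illustrative numbers only.
criteria = PocCriteria(min_defect_recall=0.95,
                       max_false_reject_rate=0.02,
                       max_latency_ms=50.0)
result = PocResult(defect_recall=0.97, false_reject_rate=0.035, latency_ms=40.0)

print(go_to_production(criteria, result))
print(unmet_criteria(criteria, result))
```

Agreeing on the criteria object before the PoC begins is the point; the code itself is trivial, but it removes any room to move the goalposts afterward.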

Myth No. 4: Once I have a successful AI deployed, I don’t need to touch it ever again

Let’s assume PGI gets past all the hurdles above, and successfully implements AI-powered quality control in their industrial machines. Because systems and processes are constantly evolving, there will never be any AI that works off-the-shelf. Rather, customers need the ability to build, customize, and continuously update AI autonomously, without AI expertise.

Successful organizations will understand the operational conditions that AI requires. The right thinking includes considering data storage/management tools, retraining costs/time, and the overall AI lifecycle management tools required to make sure an AI project does not become a mess—or worse, ineffective.

Myth No. 5: AI can just be put on the cloud

Having made it through the previous challenges, PGI has one question left: where to put the AI solution? It is usually a mistake to leave the question of AI hardware until last, as it may constrain the choice of tech stack and solution provider. While a persistent and reliable Wi-Fi connection may be a reality for certain use cases, it is often not available in an industrial context: Spotty and unreliable connectivity, coupled with the low latency required for AI to operate in machines that process tens of products per second, calls for local, edge implementations. So, it’s best to think about this first, before having to go back to step 1!
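A quick back-of-the-envelope calculation shows why latency matters so much here. The Python sketch below assumes, for simplicity, that each inspection must finish before the next product arrives (no pipelining); the line speed, inference time, and network round trip are illustrative numbers, not measurements.

```python
def per_product_budget_ms(products_per_second):
    """Time available per product, in milliseconds, at a given line speed."""
    return 1000.0 / products_per_second


def fits_budget(products_per_second, inference_ms, network_round_trip_ms=0.0):
    """True if inference plus any network round trip fits in the per-product budget."""
    total_ms = inference_ms + network_round_trip_ms
    return total_ms <= per_product_budget_ms(products_per_second)


# Assumed line speed of 20 products per second.
line_speed = 20
print(per_product_budget_ms(line_speed))

# Edge deployment: inference runs next to the machine, no network hop (assumed 15 ms).
print(fits_budget(line_speed, inference_ms=15.0))

# Cloud deployment: same model plus an assumed 80 ms round trip.
print(fits_budget(line_speed, inference_ms=15.0, network_round_trip_ms=80.0))
```

At 20 products per second the budget is 50 ms per product, so under these assumptions the same model fits comfortably at the edge and misses the budget once a network round trip is added.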

AI is Here to Stay in Industry 4.0

PGI has learned that to effectively implement AI, manufacturers need a disciplined approach and a mix of internal and external AI resources combining engineering, R&D, and product teams. PGI’s hypothetical team, like real teams, must work closely together to build, test, and deliver the application. Additionally, teams must oversee future maintenance and iterations.

Fortunately, new tools are enabling more organizations to take on AI adoption. By carefully devising a “myth-free” AI strategy, manufacturing and industrial teams can enter and ultimately lead the fourth, AI-powered Industrial Revolution.